What bkit is, in one paragraph

bkit is a Claude Code plugin that adds a methodology layer on top of the CC harness. Concretely: 39 Skills, 36 Agents, 21 hook events, 2 MCP servers, and a PDCA (Plan-Do-Check-Act) state machine with 20 guarded transitions. It is not a rewrite of Claude Code or a replacement for it: it installs into CC via /plugin install bkit and hangs off every extension point CC already exposes. The source is open on GitHub. If you agree with the thesis that the workflow around a model matters more than the model itself, bkit is an opinionated implementation of that workflow.

The short version: you type /pdca plan user-auth instead of "please write a plan for user auth," and from there every phase becomes a state transition with acceptance criteria, not a free-text prompt.

The four building blocks

bkit is assembled from four primitives Claude Code already understands, but composed into a methodology rather than left as raw parts.

Skills are reusable prompts exposed as slash commands. Each has a trigger vocabulary (multilingual), a phase gate (which PDCA stage it belongs to), and a set of allowed tools. /pdca, /control, /enterprise, /starter, /dynamic are all skills. A skill's frontmatter declares its interface:

```yaml
---
name: pdca
triggers: [pdca, plan, design, analyze, report, 계획, 설계]
allowedTools: [Read, Write, Edit, Bash, Task]
classification: Workflow
phaseGate: all
---
```

Agents are role-based subagents with per-role model assignments. cto-lead runs on Opus for architectural decisions. gap-detector runs on Sonnet for cheap, fast comparisons. code-analyzer is read-only. Each agent defines its own memory scope, max turns, and disallowed tools, so "review the design" and "critique the implementation" are not the same model call with different prompts; they are different agents with different capabilities and cost profiles.
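
One way to picture per-role agents is as small capability records rather than prompts. The sketch below is illustrative only: the `AgentSpec` type, its field names, the turn limits, and the tool lists are assumptions, not bkit's actual agent schema. Only the agent names and the Opus/Sonnet assignments for cto-lead and gap-detector come from the text above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    """Hypothetical record describing one role-based subagent."""
    name: str
    model: str                                  # model tier, e.g. "opus" or "sonnet"
    max_turns: int                              # assumed values, for illustration
    disallowed_tools: frozenset = frozenset()

    def may_use(self, tool: str) -> bool:
        """A tool is allowed unless the role explicitly forbids it."""
        return tool not in self.disallowed_tools


# "Read-only" is modeled here as forbidding write and shell tools (an assumption).
WRITE_TOOLS = frozenset({"Write", "Edit", "Bash"})

AGENTS = {
    "cto-lead": AgentSpec("cto-lead", model="opus", max_turns=30),
    "gap-detector": AgentSpec("gap-detector", model="sonnet", max_turns=10),
    # The text does not say which model code-analyzer uses; "sonnet" is a guess.
    "code-analyzer": AgentSpec("code-analyzer", model="sonnet", max_turns=10,
                               disallowed_tools=WRITE_TOOLS),
}
```

The point of the record is that capability differences live in data: `AGENTS["code-analyzer"].may_use("Edit")` is false no matter how the prompt is worded.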

Hooks intercept lifecycle events. 21 events across 6 layers: SessionStart, PreToolUse, PostToolUse, UserPromptSubmit, TaskCompleted, PreCompact, and more. bkit uses hooks for context injection (so every session starts with PDCA state in scope), audit logging, destructive-op blocking, and token ledger accounting.
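
As a rough sketch of what destructive-op blocking could look like, a PreToolUse hook can inspect the pending tool call and refuse it. The example assumes the hook receives a JSON payload on stdin with `tool_name` and `tool_input` fields, and that exit code 2 signals a blocking error; check the Claude Code hooks documentation for the exact contract. The deny-list and function names are hypothetical, not bkit's actual rules.

```python
import json
import re
import sys

# Hypothetical deny-list; a real plugin would likely be more thorough.
DESTRUCTIVE_PATTERNS = [
    # rm with both -r and -f in the same flag group (e.g. -rf, -fr)
    re.compile(r"\brm\s+-(?=\w*r)(?=\w*f)\w+", re.IGNORECASE),
    re.compile(r"\bgit\s+push\b.*--force\b"),
    re.compile(r"\bgit\s+reset\s+--hard\b"),
    re.compile(r"\bdrop\s+(table|database)\b", re.IGNORECASE),
]


def is_destructive(command: str) -> bool:
    """True when the shell command matches any deny-list pattern."""
    return any(p.search(command) for p in DESTRUCTIVE_PATTERNS)


def decide(payload: dict) -> tuple[int, str]:
    """Return (exit_code, message) for one hook payload."""
    if payload.get("tool_name") != "Bash":
        return 0, ""                      # only shell commands are inspected
    command = payload.get("tool_input", {}).get("command", "")
    if is_destructive(command):
        return 2, f"Blocked destructive command: {command}"
    return 0, ""


def main() -> int:
    """Entry point when wired up as a hook command: read stdin, report, return code."""
    code, message = decide(json.load(sys.stdin))
    if message:
        print(message, file=sys.stderr)
    return code
```

To run it as a script, end the file with `sys.exit(main())`. Keeping the decision in a pure function (`decide`) makes the rule set testable without a live session.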

MCP servers (two of them) expose structured tools so the model does not reason about state from memory alone. bkit-pdca offers bkit_pdca_status, bkit_plan_read, bkit_design_read, bkit_metrics_get. bkit-analysis offers bkit_gap_analysis, bkit_code_quality, bkit_regression_rules. They read from durable files in .bkit/state/, so session restarts never lose PDCA state.
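
The durability claim rests on state living in files rather than in the conversation. A minimal sketch of that idea, assuming a single JSON file per project: the file name `pdca-status.json`, the function names, and the state shape are all hypothetical, since the text only names the `.bkit/state/` directory.

```python
import json
import os
import tempfile
from pathlib import Path

STATE_FILE = "pdca-status.json"   # hypothetical file name


def save_state(state_dir: Path, state: dict) -> Path:
    """Write state atomically so a crash never leaves a half-written file."""
    state_dir.mkdir(parents=True, exist_ok=True)
    target = state_dir / STATE_FILE
    fd, tmp_name = tempfile.mkstemp(dir=state_dir, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as handle:
        json.dump(state, handle, indent=2)
    os.replace(tmp_name, target)  # atomic rename within the same directory
    return target


def load_state(state_dir: Path) -> dict:
    """Read state back; a missing file means no feature is in flight yet."""
    target = state_dir / STATE_FILE
    if not target.exists():
        return {}
    return json.loads(target.read_text(encoding="utf-8"))
```

A status tool built on `load_state` returns the same answer before and after a restart, which is the property the paragraph above describes.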

The composition is what makes it work. Skills expose verbs, agents execute them, hooks guard the edges, MCP servers persist state. No single piece is exotic; the leverage is in how they fit together.

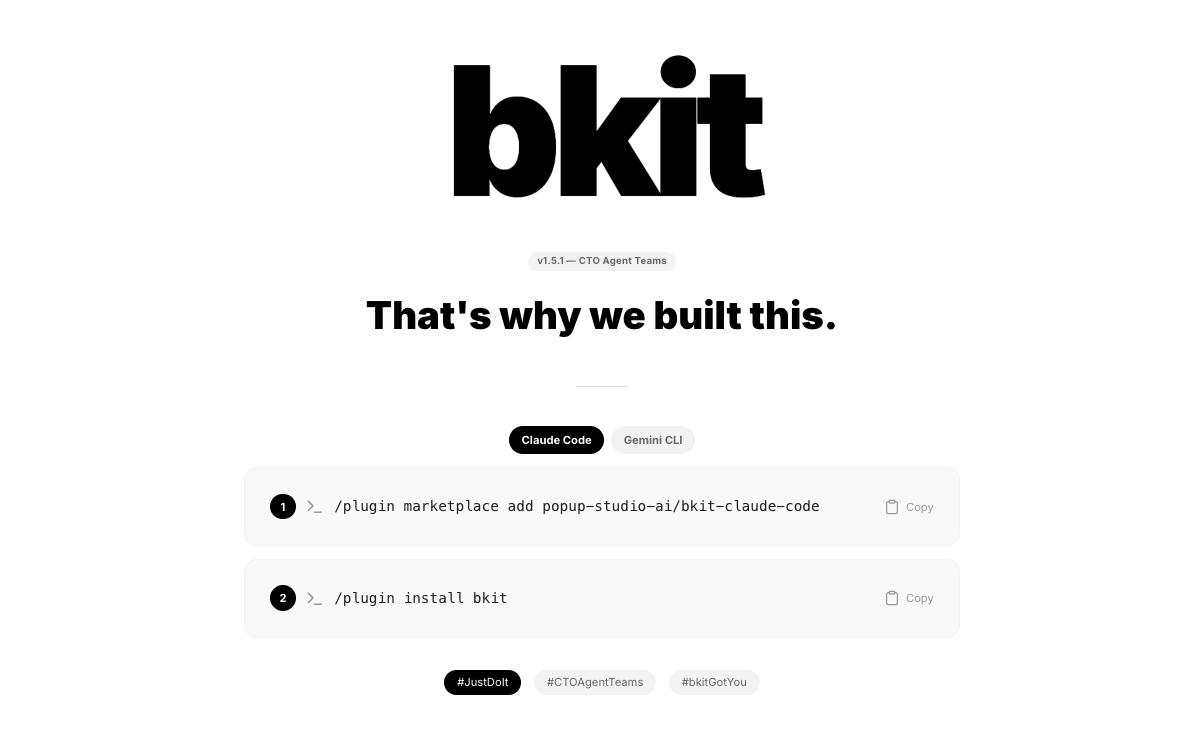

Install and first run

Assuming you already have Claude Code installed:

```
/plugin marketplace add popup-studio-ai/bkit-claude-code
/plugin install bkit
/output-style bkit-learning
```

Three commands. The first registers bkit's marketplace source, the second installs the plugin (Skills + Agents + Hooks + MCP servers + output styles, all wired at once), and the third picks an output style that teaches you as you go. There are four output styles shipped: bkit-learning, bkit-pdca-guide, bkit-enterprise, and bkit-pdca-enterprise. bkit-learning is the gentle one, recommended for your first project.

After install, /bkit help lists what is available. A typical session opens with bkit injecting the current PDCA state into context via the SessionStart hook, so Claude knows which feature you were working on and where you left off without you retyping it.

The PDCA state machine in action

The flagship user-facing command is /pdca. It moves a single feature through seven phases, each a guarded transition with its own acceptance criteria:

```
/pdca pm user-auth        # requirements -> PRD
/pdca plan user-auth      # plan doc with acceptance criteria
/pdca design user-auth    # 3 architectural arcs; pick one
/pdca do user-auth        # implementation guided by the design
/pdca analyze user-auth   # gap-detector: design vs impl match-rate
/pdca iterate user-auth   # auto-fix until match-rate >= 90%
/pdca report user-auth    # completion doc with metrics
```

At each phase, bkit writes a document to disk: docs/00-pm/user-auth.prd.md, docs/01-plan/user-auth.plan.md, docs/02-design/user-auth.design.md, and so on. These are not throwaway artifacts; they are the ground truth that later phases reference. When /pdca analyze runs, it literally diffs the design doc against the implementation diff and produces a match rate.
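
To make "guarded transition" concrete, here is a minimal sketch of a phase machine where each move requires an artifact from an earlier phase. It is not bkit's implementation: the transition table is an illustrative subset (the text mentions 20 transitions), and the `Feature` class and artifact labels are hypothetical.

```python
from dataclasses import dataclass, field

# (from_phase, to_phase) -> artifact that must exist before the move is allowed.
TRANSITIONS = {
    ("pm", "plan"): "prd",
    ("plan", "design"): "plan",
    ("design", "do"): "design",
    ("do", "analyze"): "implementation",
    ("analyze", "iterate"): "analysis",
    ("iterate", "analyze"): "implementation",
    ("analyze", "report"): "analysis",
}


@dataclass
class Feature:
    name: str
    phase: str = "pm"
    artifacts: set = field(default_factory=set)

    def advance(self, target: str) -> None:
        """Move to `target` only if the transition exists and its guard holds."""
        required = TRANSITIONS.get((self.phase, target))
        if required is None:
            raise ValueError(f"no transition {self.phase} -> {target}")
        if required not in self.artifacts:
            raise PermissionError(f"{target} is blocked: missing {required}")
        self.phase = target
```

Under this model, skipping from pm straight to design fails because no such transition exists, and moving from pm to plan fails until the PRD artifact has been recorded. That is the difference from a free-text prompt: the request is checked against state before anything runs.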

Along the way there are five interactive checkpoints, each a small pause with a structured question:

- CP1 (after PM analysis): do the requirements match what you meant?

- CP2 (after plan): acceptance criteria look right?

- CP3 (after design): three arcs drafted — which one?

- CP4 (before do): scope fits the design?

- CP5 (after analyze): ship, iterate, or rework the design?

The checkpoints are the human-in-the-loop safety valve. You do not get steamrolled; you get asked. In L0 or L1 automation, checkpoints are mandatory. In L2+, the routine ones auto-confirm and only the key ones stay human-gated.

For a first-time user, the full flow of a small feature takes about ten minutes of typing and fifteen of the agent actually working. What comes out is four documents plus implementation plus metrics — not just code.

Quality gates and auto-iterate

The crucial agent in this cycle is gap-detector. When /pdca analyze runs, gap-detector:

- Reads the design doc (docs/02-design/user-auth.design.md).

- Walks the implementation diff for the feature.

- Lines up design intent against implementation reality.

- Produces a structured match rate (0–100%) and a list of specific gaps.

The threshold is 90%. Below that, /pdca iterate kicks off a loop: read gaps, patch each gap via an implementation agent, re-run gap-detector, repeat. The loop is capped at five iterations. If it still fails after five, bkit stops and asks a human; it does not silently ship a 60% match.
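
The loop described above can be sketched in a few lines. This is a simplified model, not bkit's code: `measure` stands in for gap-detector, `patch` stands in for the implementation agent, and both names are hypothetical. The 90% threshold and the five-iteration cap are taken from the text.

```python
from typing import Callable

THRESHOLD = 90.0      # match-rate percentage from the text
MAX_ITERATIONS = 5    # iteration cap from the text


def iterate(
    measure: Callable[[], tuple[float, list[str]]],
    patch: Callable[[str], None],
) -> tuple[str, float, int]:
    """Run measure/patch rounds; return (outcome, final_rate, rounds_used).

    `measure` returns (match_rate, gaps); `patch` attempts to fix one gap.
    """
    rate, gaps = measure()
    rounds = 0
    while rate < THRESHOLD and rounds < MAX_ITERATIONS:
        for gap in gaps:
            patch(gap)
        rounds += 1
        rate, gaps = measure()
    # Below threshold after the cap: hand control back to a person.
    outcome = "pass" if rate >= THRESHOLD else "needs_human"
    return outcome, rate, rounds
```

The important design choice is the third outcome: the loop can end in `needs_human` instead of returning whatever it has after the last round.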

This is the Evaluator-Optimizer pattern from the multi-agent literature: two roles, the generator and the critic, where the critic has an explicit spec (the design doc) to compare against. What makes this beat a bigger single-pass model is boring: most failure modes come from the generator forgetting a constraint the design doc made explicit. A second pass with the critic noticing "you never wired the rate limiter" is a cheaper fix than a smarter single-shot generator.

The same gap-detector pattern shows up in bkit_regression_rules: eight modules across the cc-regression library that detect reintroduced bugs after a CC or model upgrade. Same idea, different target.

L0–L4 automation and the trust score

One bkit detail that surprises new users is the automation level system. Your current level shows at the top of every session, and you can inspect it at any time:

```
/control status
# Level: L2 (Semi-Auto)
# Trust Score: 0.78 (23 PDCA cycles, 91% avg match-rate)
# Routine transitions auto · key decisions gated
# Next escalation: L3 available at Trust Score >= 0.85
```

The five levels:

- L0 Manual — every action requires explicit approval. Useful for a first session where you do not trust the agent yet.

- L1 Guided — routine actions proceed; every checkpoint is mandatory.

- L2 Semi-Auto (default) — routine checkpoints auto-confirm; key ones (CP3 design selection, CP5 ship/iterate/rework) stay human-gated.

- L3 Auto — most transitions automatic; only destructive operations and level escalations are gated.

- L4 Full-Auto — fully autonomous PDCA cycles. Reserved for well-scoped features where you have repeatedly proven the loop works for you.
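
Read as a rule, the level list answers one question: does this checkpoint pause for a person? The function below is one reading of the descriptions above, not bkit's actual logic. In particular it assumes that at L3 and L4 none of the five checkpoints are gated, since the text says only destructive operations and level escalations remain gated at L3.

```python
KEY_CHECKPOINTS = {"CP3", "CP5"}   # design selection, ship/iterate/rework


def needs_human(level: int, checkpoint: str) -> bool:
    """Return True when a checkpoint should pause for a person."""
    if not 0 <= level <= 4:
        raise ValueError(f"unknown automation level: {level}")
    if level <= 1:
        return True                          # L0/L1: every checkpoint is mandatory
    if level == 2:
        return checkpoint in KEY_CHECKPOINTS  # L2: only key decisions stay gated
    return False                             # L3/L4: checkpoints auto-confirm
```

At the default L2, `needs_human(2, "CP2")` is false while `needs_human(2, "CP3")` is true, which matches the "routine auto, key decisions gated" status line.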

Graduation is not free. The trust score is a weighted function of your track record: completed cycles, average match rate, destructive-op count, interrupt frequency. It escalates slowly and comes with a cooldown so a lucky streak does not unlock autonomy before the system has seen you work.
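
A weighted score with a cooldown might look like the sketch below. Every number in it is invented for illustration: the weights, the 30-cycle saturation point, the per-incident penalty, and the five-cycle cooldown are assumptions, and the function is not meant to reproduce the scores bkit actually reports. Only the four inputs come from the text.

```python
from dataclasses import dataclass


@dataclass
class TrackRecord:
    completed_cycles: int
    avg_match_rate: float      # 0.0 to 1.0
    destructive_ops: int       # count of destructive operations attempted
    interrupt_rate: float      # fraction of cycles the user interrupted, 0.0 to 1.0


def trust_score(r: TrackRecord) -> float:
    """Weighted blend clamped to [0, 1]; all weights are made up for illustration."""
    experience = min(r.completed_cycles / 30, 1.0)   # saturates at 30 cycles
    score = (
        0.35 * experience
        + 0.45 * r.avg_match_rate
        + 0.20 * (1.0 - r.interrupt_rate)
        - 0.05 * r.destructive_ops                   # each incident costs trust
    )
    return max(0.0, min(score, 1.0))


def may_escalate(score: float, threshold: float,
                 cycles_since_last_change: int, cooldown_cycles: int = 5) -> bool:
    """Escalation needs both a high enough score and an elapsed cooldown."""
    return score >= threshold and cycles_since_last_change >= cooldown_cycles
```

The cooldown is what stops a lucky streak: a score above the threshold is necessary but not sufficient until enough cycles have passed since the last level change.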

/control level 3 escalates; /control level 0 always works as a panic brake. The intent is that automation is earned per project, not set once in global config.

Extending bkit + when to use it

Everything in bkit is overridable. Drop a pdca.skill.md into .claude/skills/ in your project and bkit's priority chain picks yours first. The resolution order:

| Priority | Location | Role |
|---|---|---|
| 1 (highest) | .claude/skills/*.skill.md | project override (repo-committed, team-shared) |
| 2 | ~/.claude/skills/*.skill.md | user defaults (personal, cross-project) |
| 3 (lowest) | {plugin}/skills/*/SKILL.md | bkit shipped defaults |
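
The table amounts to a first-match lookup over three locations. A minimal sketch, assuming the path patterns in the table map onto a skill name as shown; the function name and its parameters are hypothetical, and bkit's real resolver may handle more cases.

```python
from pathlib import Path
from typing import Optional


def resolve_skill(name: str, project: Path, home: Path, plugin: Path) -> Optional[Path]:
    """Return the highest-priority skill file that exists, or None."""
    candidates = [
        project / ".claude" / "skills" / f"{name}.skill.md",   # 1: project override
        home / ".claude" / "skills" / f"{name}.skill.md",      # 2: user default
        plugin / "skills" / name / "SKILL.md",                 # 3: shipped default
    ]
    for path in candidates:
        if path.is_file():
            return path
    return None
```

Because the lookup stops at the first hit, committing an override to the repository changes behavior for the whole team without touching the plugin's own files.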

The same override chain applies to agents, hooks, templates, and output styles. You can ship a team-specific qa-lead with your own KPIs, or swap out cto-lead entirely. The skill-create command walks you through authoring a new skill interactively.

pm-lead-skill-patch is an example of non-invasive extension: it hooks into pm-lead's Phase 4 without editing the upstream file.

So when should you reach for bkit versus raw Claude Code? A rough heuristic:

- Small scripts / one-off fixes: raw CC is fine. PDCA overhead is not worth it for a ten-line change.

- Anything with a design intent you might forget by Thursday: bkit starts paying off at the design doc.

- Features that cross multiple files or require review: gap-detector is where the real leverage is.

- Team projects: the persistent docs/ artifacts (PRD, plan, design, report) become shared ground truth rather than private chat logs that evaporate when the window closes.

The five-line summary:

- bkit is a Claude Code plugin that encodes PDCA, quality gates, and graduated automation into reusable commands.

- Install is three commands; the first session teaches itself via the bkit-learning output style.

- The /pdca flow takes one feature from requirements to completion with documents at every step.

- gap-detector + 90% match-rate + max-five iterate is the core quality loop.

- L0–L4 automation lets you graduate trust per project, not flip a global switch.

If Article 1 argued that workflow beats model choice, this is the concrete shape of that workflow. Install it, run one /pdca cycle on something small, and decide for yourself whether the methodology layer is worth it.

Related reading: